Abstract

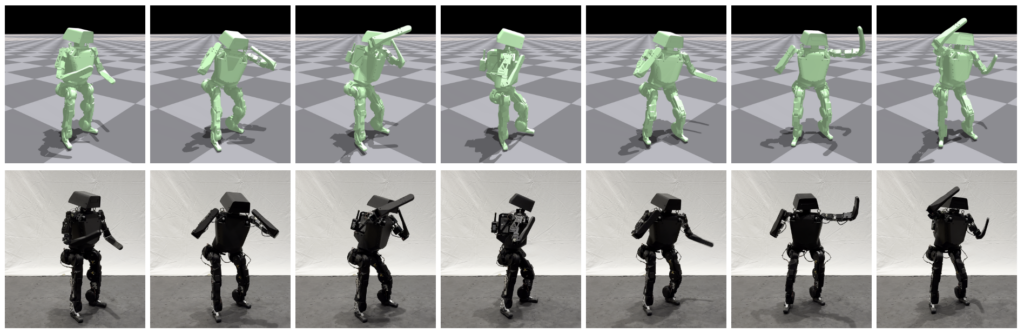

Recent advances in generative motion models have achieved remarkable results, enabling the synthesis of lifelike human motions from textual descriptions. These kinematic approaches, while visually appealing, often produce motions that violate physical constraints, resulting in artifacts that impede real-world deployment. To address this issue, we introduce a novel method that integrates kinematic generative models with physics-based character control. Our approach begins by training a reward surrogate to predict the performance of the downstream non-differentiable control task, which provides an efficient and differentiable loss function. This reward model is then used to fine-tune a baseline generative model so that the generated motions are not only diverse but also physically plausible for real-world scenarios. The result is the Robot Motion Diffusion Model, a text-conditioned kinematic diffusion model that interfaces with a reinforcement learning-based tracking controller. We demonstrate the effectiveness of this method on a challenging humanoid robot, confirming its practical utility and robustness in dynamic environments.
