Abstract

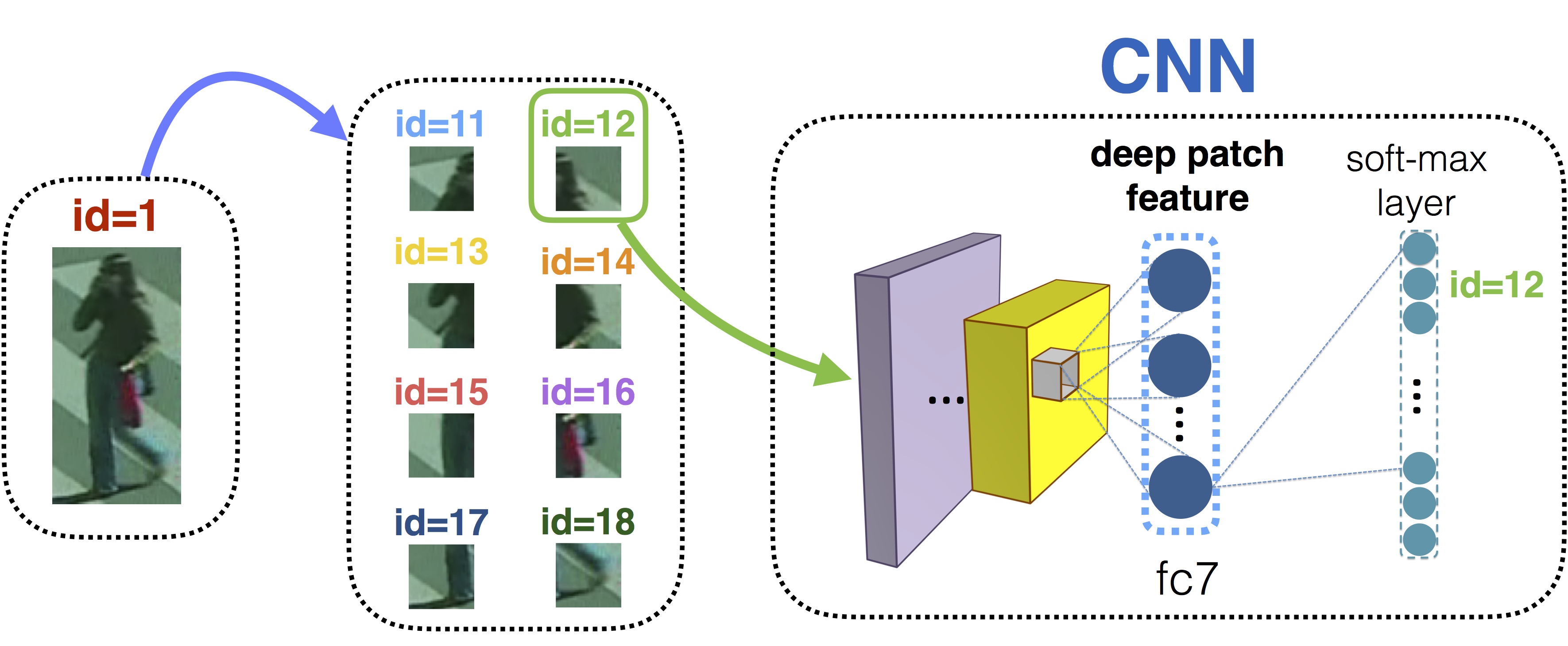

The methodology for finding the same individual in a network of cameras must deal with significant changes in appearance caused by variations in illumination, viewing angle and a person’s pose. Re-identification requires solving two fundamental problems: (1) determining a distance measure between features extracted from different cameras that copes with illumination changes (metric learning); and (2) ensuring that matched features refer to the same body part (correspondence). Most metric learning approaches focus on finding a robust distance measure between bounding box images and neglect alignment. In this paper, we propose to learn appearance measures for patches that are combined using deformable models. Learning metrics for patches avoids strong dimensionality reduction, thus keeping more information. Additionally, we allow patches to change their locations, directly addressing the correspondence problem. As patches from different locations may share the same metric, our method effectively multiplies the amount of training data and allows patch metrics to be learned from smaller amounts of labeled images. Different metric learning approaches (KISSME, XQDA, LSSL) together with different deformable models (spring constraints, one-to-one matching constraints) are investigated and compared. For describing patches, we propose to learn a deep feature representation with Convolutional Neural Networks (CNNs), thus obtaining highly effective features for re-identification. We demonstrate that our approach significantly outperforms state-of-the-art methods on multiple datasets.
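To make the idea of combining patch metrics with a deformable model concrete, the following is a minimal sketch of spring-constrained patch matching. It is an illustration under stated assumptions, not the implementation evaluated in the paper: the function names, the quadratic displacement penalty, the stiffness `alpha`, the search `radius`, and the use of an identity matrix in place of a learned metric are all hypothetical choices made for the example.

```python
from itertools import product


def mahalanobis_sq(x, y, M):
    """Squared distance (x - y)^T M (x - y); M is a metric matrix as nested lists."""
    d = [a - b for a, b in zip(x, y)]
    n = len(d)
    return sum(d[i] * M[i][j] * d[j] for i in range(n) for j in range(n))


def deformable_distance(patches_a, patches_b, M, alpha=0.1, radius=1):
    """Sum over patches of image A of the cheapest nearby match in image B.

    patches_a, patches_b: dicts mapping a grid location (row, col) to a feature vector.
    alpha: assumed spring stiffness that penalises squared displacement.
    radius: assumed search radius, in grid cells, around the original location.
    """
    total = 0.0
    for (r, c), feat in patches_a.items():
        best = float("inf")
        for dr, dc in product(range(-radius, radius + 1), repeat=2):
            cand = patches_b.get((r + dr, c + dc))
            if cand is None:
                continue  # candidate location falls outside image B
            cost = mahalanobis_sq(feat, cand, M) + alpha * (dr * dr + dc * dc)
            best = min(best, cost)
        total += best
    return total


# Toy example: the two patches of image B are swapped vertically relative to A.
identity = [[1.0, 0.0], [0.0, 1.0]]  # stand-in for a learned patch metric
image_a = {(0, 0): [1.0, 0.0], (1, 0): [0.0, 1.0]}
image_b = {(0, 0): [0.0, 1.0], (1, 0): [1.0, 0.0]}

rigid = deformable_distance(image_a, image_b, identity, radius=0)
deformed = deformable_distance(image_a, image_b, identity, alpha=0.1, radius=1)
```

In this toy case the rigid comparison (`radius=0`) scores the misaligned pair as distant, whereas allowing each patch to move by one cell lets it find its counterpart at the cost of a small spring penalty. In practice `M` would be replaced by a metric learned with, for example, KISSME, XQDA or LSSL. This sketch matches each patch independently, so it does not enforce the one-to-one matching constraints mentioned above.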
